AI Infrastructure

Why Opus 4.8 Pulled Me Back to Claude

Every

R&D Part 1: Here to Win

OpenAI

Deno 2.8 Quietly Fixed 3 Huge Node Problems #nodejs #programming

Better Stack

GitHub Trending Weekly #34: workshop, forkd, HRM-Text, codegraph, ELF, ASCII-Aquarium, mailflare

Github Awesome

Claude Plans, Gemini Designs: One Workflow for Beautiful Frontends (LIVE)

Cole Medin

Can We Transfer SKILLS w/ Memory to HERMES?

Discover AI

Finally! Claude Code Saves Your Model Preferences #claude #aicoding

DIY Smart Code

Vibe Coding is Serious

The PrimeTime

The GTA CEO is so based

The PrimeTime

This One File Fixed My Dev Environment (Devbox)

Better Stack

Holy sh*t I think Anthropic is profitable now

Theo - t3․gg

Codex 4.0 (CRAZY NEW UPGRADES): THIS IS INSANITY!

AICodeKing

I was wrong about GPT 5.5

Ben Davis

Code mode in the Mistral Vibe web app

Mistral AI

Custom skills and scheduling with Mistral Vibe

Mistral AI

Investment portfolio analysis with Mistral Vibe

Mistral AI

Introducing the Mistral Vibe extension for VS Code

Mistral AI

Mistral Vibe: the agent for long-horizon work

Mistral AI

Use Connectors in Workflows

Mistral AI

Use Connectors in Vibe

Mistral AI

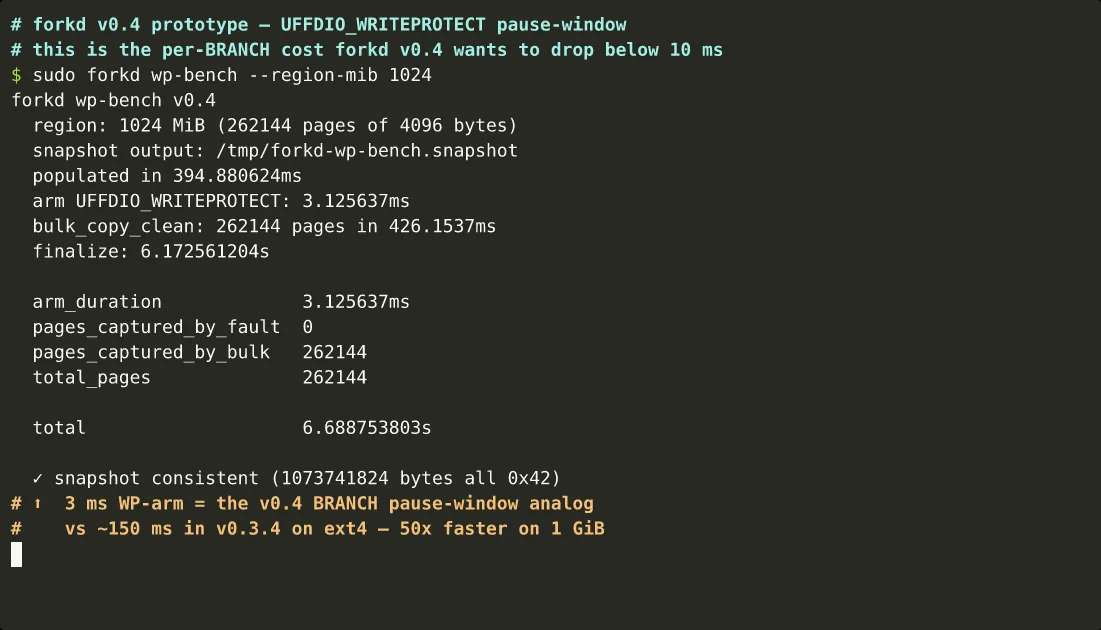

Forkd hits 101ms microVM forking

Forkd sandboxes AI agents by forking warmed Firecracker microVMs in 101 ms. Each fork gets hardware-level isolation, and copy-on-write memory keeps rapid agent fan-out efficient.

Hippocratic AI hits 99.9% safety on NVIDIA Blackwell

Hippocratic AI achieved 99.9% clinical safety and a 2x prefill speedup using DigitalOcean’s NVIDIA Blackwell-powered AI-Native Cloud. The collaboration demonstrates the real-world performance gains of the HGX B300 for high-concurrency, safety-critical medical agents.

Cloudflare unveils Town Lake, Skipper AI agent

Cloudflare unveils its internal unified data platform, Town Lake, alongside Skipper, an AI agent that answers natural-language queries across disparate datasets while maintaining strict governance. Built on Apache Trino and Iceberg, it targets the "data sprawl" problem that hobbles most enterprise AI initiatives.

Tailscale makes Redpoint’s 2026 InfraRed 100

Tailscale has been recognized in Redpoint’s 2026 InfraRed 100, an annual list honoring 100 of the most promising private companies in AI infrastructure. The zero-trust networking platform is cited as a foundational layer for securing distributed AI workloads and providing the essential "connective tissue" for the emerging agentic era.