AI Security

Karpathy-Skill + Claude Code, OpenCode: This SIMPLE ONE-FILE SKILL Makes YOUR AI CODER WAY BETTER!

AICodeKing · 2h ago

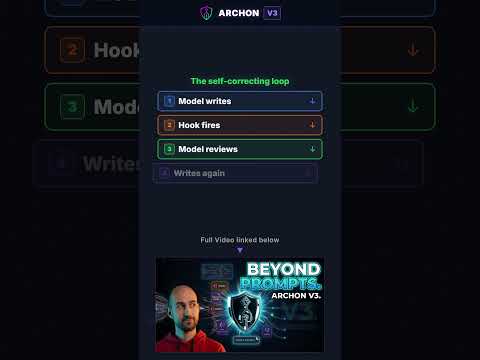

How Archon V3 catches its own mistakes

DIY Smart Code · 2h ago

AI Video Gets Unbelievably Realistic: See the Details! #shorts

AI Samson · 3h ago

Create Animated Clones: Gender Swap & YouTube Shorts Launch #shorts

AI Samson · 3h ago

Claude just unlocked the SHOGGOTH...

Wes Roth · 5h ago

Claude Mythos, Deepseek v4, HappyHorse, Meta’s new AI, realtime video games: AI NEWS

AI Search · 8h ago

Did Google Just Make The ULTIMATE Edge AI Model? (Gemma 4)

Better Stack · 9h ago

New AI Robot Is Starting to Feel Human (Artificial Humans Are Here)

AI Revolution · 12h ago

This NEW AI Coding Agent is 10x Faster Than Claude Code (100% Free)

Rob The AI Guy · 15h ago

Run S3 on Your Laptop? This Changes Everything (MinIO)

Better Stack · 17h ago

Build This Obsidian System and Ditch Closed Platforms

Eric Michaud · 19h ago

OpenCode + Gemma 4 31b = Full Apps INSTANTLY (100% FREE)

Income stream surfers · 19h ago

NVIDIA’s New AI: The Biggest Leap In Robot Learning Yet

Two Minute Papers · 19h ago

yacht problems

The PrimeTime · 20h ago

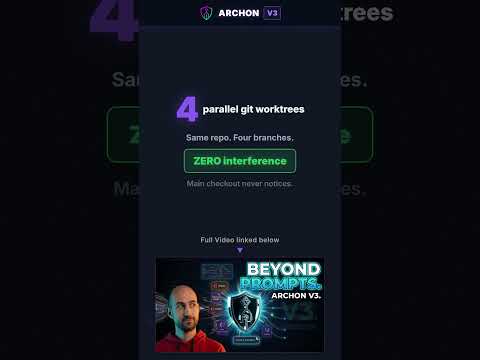

4 agents no stepping on each other - Archon V3

DIY Smart Code · 21h ago

Beyond KV-Cache: Test-Time Training & Invariant Latent Topologies for ICL

Discover AI · 22h ago

Hair Drying GPUs

The PrimeTime · 22h ago

Y Combinator CEO Garry Tan open-sourced his personal AI knowledge system

Github Awesome · 23h ago

How Top AI Engineers Structure Their Agents #aicoding #tutorial

DIY Smart Code · 1d ago

Anthropic's Managed Agents Are Different (Here's Why)

Better Stack · 1d ago

Altman home firebombed as AI anxiety peaks

OpenAI CEO Sam Altman's San Francisco home was targeted in a Molotov cocktail attack, which he linked to escalating public anxiety and hostile rhetoric surrounding AI development. The incident underscores the growing physical risks facing tech leaders as the societal impact of AI becomes a flashpoint for violence.

Molotov cocktail hits Sam Altman's home

San Francisco police arrested a 20-year-old man after he threw a Molotov cocktail at Sam Altman's residence and later threatened to burn down OpenAI’s headquarters. No injuries were reported, and property damage was minimal following quick intervention by security guards and the SFPD.

Altman home hit by Molotov, suspect eyes OpenAI

A 20-year-old man was arrested after allegedly throwing a Molotov cocktail at Sam Altman's San Francisco residence and threatening to burn down OpenAI's headquarters. No injuries were reported, and property damage to the Russian Hill home was minimal.

CPU-Z, HWMonitor links serve trojanized installers

On April 9-10, 2026, users reported that CPUID’s official download flow for CPU-Z 2.19 and HWMonitor 1.63 briefly served a malicious installer instead of the expected files. Warning signs included a renamed `HWiNFO_Monitor_Setup.exe`, Russian-language setup text, and antivirus alerts. The incident first surfaced on Reddit and was then picked up by PC Gamer. PC Gamer reported that the bad links appeared to come from a compromised download path rather than from the signed binaries themselves (https://old.reddit.com/r/pcmasterrace/comments/1sh4e5l/warning_hwmonitor_163_download_on_the_official/ , https://www.pcgamer.com/software/security/cpuids-download-page-has-been-hacked-with-its-popular-processor-and-pc-info-tools-replaced-with-links-to-files-containing-malware/).

Codex safety filters overflag coding work

Users of GPT-5.3 Codex are reporting that routine development tasks are being misclassified by the product’s cyber-safety filters, triggering downgrades to GPT-5.2. The reported failures include benign changes like CSS edits being treated as high-risk activity, which suggests the safety layer is overfiring and disrupting everyday engineering workflows rather than narrowly catching genuinely dangerous requests.