agent-memory-core builds local memory that compounds

The project is a pip-installable Python memory layer that keeps storage local with ChromaDB and Ollama, then runs nightly consolidation to compress episodic chunks into durable facts. It also adds active forgetting so credentials and lessons persist while stale observations and sessions decay.
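Type-dependent decay is one common way to implement "credentials and lessons persist while stale observations and sessions decay." The sketch below shows that idea. It is not agent-memory-core's code. The names `Memory`, `retention`, `forget`, `HALF_LIFE_DAYS` and the half-life values are all hypothetical. Pinned types never lose score. Other types fall off exponentially and are dropped once they sink below a floor.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical half-lives (days) per memory type. None means "never decays".
# These numbers are illustrative assumptions, not agent-memory-core defaults.
HALF_LIFE_DAYS: dict[str, float | None] = {
    "credential": None,
    "lesson": None,
    "observation": 14.0,
    "session": 3.0,
}
DEFAULT_HALF_LIFE_DAYS = 7.0  # assumed fallback for types not listed above


@dataclass
class Memory:
    text: str
    memory_type: str
    age_days: float
    salience: float = 1.0  # importance at write time, assumed to be in [0, 1]


def retention(memory: Memory) -> float:
    """Decayed score: salience * 0.5 ** (age / half_life), or salience if pinned."""
    half_life = HALF_LIFE_DAYS.get(memory.memory_type, DEFAULT_HALF_LIFE_DAYS)
    if half_life is None:
        return memory.salience
    return memory.salience * 0.5 ** (memory.age_days / half_life)


def forget(memories: list[Memory], floor: float = 0.1) -> list[Memory]:
    """Keep only memories whose decayed score is still at or above `floor`."""
    return [m for m in memories if retention(m) >= floor]


memories = [
    Memory("Deploy key lives in the team vault", "credential", age_days=400),
    Memory("User opened the settings page", "observation", age_days=60),
    Memory("Debugging session about flaky tests", "session", age_days=1),
]
print([m.memory_type for m in forget(memories)])  # ['credential', 'session']
```

The 60-day observation scores about 0.05, which is below the 0.1 floor. The one-day session still scores about 0.79. The credential is pinned no matter how old it is.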

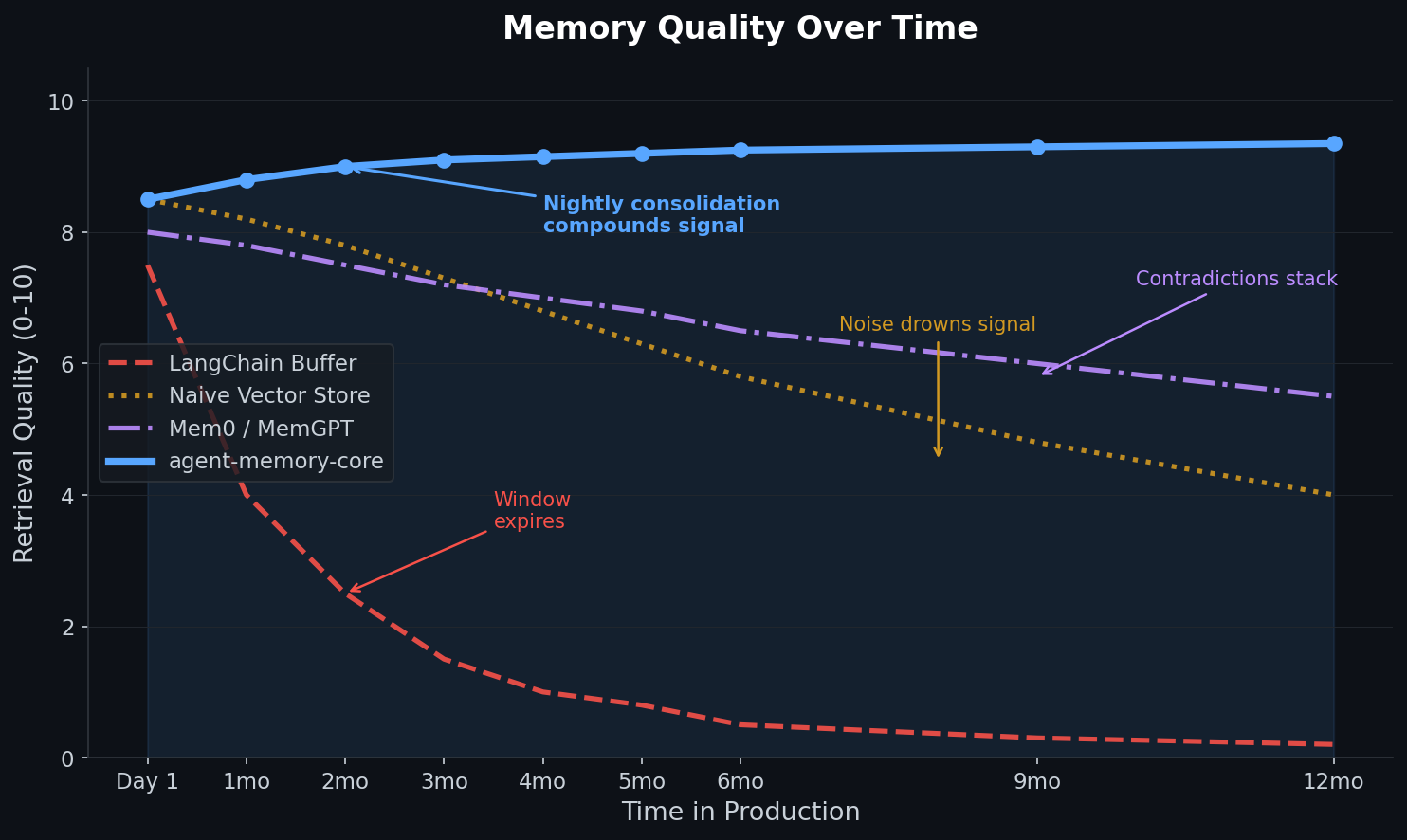

The core idea is strong. Memory systems usually fail because of data bloat, not just poor retrieval, so pruning and consolidation are the right battleground. The main question is less whether the design works in principle and more whether its clustering defaults hold up outside the author’s benchmark set.

- A Jaccard threshold of `0.25` is a reasonable starting point for short, repetitive notes, but it should almost certainly be configurable per domain, chunk length, and memory type.

- The current clustering priority (source/type first, then lexical overlap, then the entity graph) is sensible for precision. It should still be tunable, because some domains will benefit from entity-first merging while others need stricter source boundaries.

- Soft-deleting originals and decomposing consolidated output into atomic facts is the right shape for long-horizon recall, because it preserves provenance while reducing retrieval noise.

- The benchmark numbers are impressive, but the important next step is ablation: show how much of the gain comes from consolidation, forgetting, salience scoring, and the graph layer individually.

- If it really runs fully locally using only stdlib networking, that is a practical advantage for agents that need offline operation and predictable cost.
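The sketch below shows how the clustering knobs from the first two points could be exposed as configuration. It uses a greedy single pass, which may not be what agent-memory-core actually does. It also makes several assumptions:

- All names are hypothetical: `Chunk`, `cluster`, `JACCARD_THRESHOLDS`, `RULES`.
- Jaccard thresholds are set per memory type, with `0.25` as the default.
- Source/type is a hard boundary when `strict_source=True`.
- The priority of the lexical and entity rules is an `order` parameter.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class Chunk:
    text: str
    source: str
    memory_type: str
    entities: frozenset[str] = frozenset()

    def tokens(self) -> set[str]:
        # Lowercased alphanumeric tokens; a real system might also drop stopwords.
        return set(re.findall(r"[a-z0-9]+", self.text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical per-type thresholds; "default" mirrors the 0.25 discussed above.
JACCARD_THRESHOLDS = {"default": 0.25, "lesson": 0.4}


def lexical_match(a: Chunk, b: Chunk) -> bool:
    threshold = JACCARD_THRESHOLDS.get(a.memory_type, JACCARD_THRESHOLDS["default"])
    return jaccard(a.tokens(), b.tokens()) >= threshold


def entity_match(a: Chunk, b: Chunk) -> bool:
    return bool(a.entities & b.entities)


RULES: dict[str, Callable[[Chunk, Chunk], bool]] = {
    "lexical": lexical_match,
    "entity": entity_match,
}


def cluster(chunks: list[Chunk], order: tuple[str, ...] = ("lexical", "entity"),
            strict_source: bool = True) -> list[list[Chunk]]:
    """Greedy single pass: each chunk joins the first cluster matched by the
    highest-priority rule, otherwise it starts a new cluster."""
    clusters: list[list[Chunk]] = []
    for chunk in chunks:
        target = None
        for rule_name in order:
            rule = RULES[rule_name]
            for group in clusters:
                seed = group[0]  # compare against the cluster's first member
                if strict_source and (seed.source, seed.memory_type) != (chunk.source, chunk.memory_type):
                    continue  # source/type acts as a hard boundary
                if rule(seed, chunk):
                    target = group
                    break
            if target is not None:
                break
        if target is None:
            clusters.append([chunk])
        else:
            target.append(chunk)
    return clusters


notes = [
    Chunk("deploy failed on staging server", "chat", "observation", frozenset({"staging"})),
    Chunk("billing export for acme", "chat", "observation", frozenset({"acme"})),
    Chunk("deploy failed on acme server", "chat", "observation", frozenset({"acme"})),
]
lexical_first = cluster(notes, order=("lexical", "entity"))
entity_first = cluster(notes, order=("entity", "lexical"))
```

With lexical-first priority, the third note merges with the staging deploy failure, since their Jaccard similarity is 4/6. With entity-first priority, it merges with the other `acme` note instead. The same data yields different clusters, which is why the order is worth exposing as a setting.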
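The soft-delete plus atomic-facts shape can be pictured as two record types linked by provenance IDs. The sketch below is a minimal illustration under these assumptions:

- `Episode`, `Fact`, `consolidate`, `retrievable`, and `provenance` are hypothetical names, not the project's API.
- A naive regex sentence split stands in for the LLM decomposition step the project presumably uses.

Originals are flagged rather than erased. This keeps them out of retrieval while each fact can still be traced back to the episodes it came from.

```python
from __future__ import annotations

import re
import uuid
from dataclasses import dataclass


@dataclass
class Episode:
    id: str
    text: str
    deleted: bool = False  # soft-delete flag: the row is kept for provenance


@dataclass
class Fact:
    id: str
    text: str
    source_ids: tuple[str, ...]  # IDs of the episodes this fact was derived from


def split_atomic(summary: str) -> list[str]:
    # Naive sentence split, standing in for an LLM decomposition step.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", summary) if s.strip()]


def consolidate(cluster: list[Episode], summary: str) -> list[Fact]:
    """Emit one Fact per atomic claim and soft-delete the source episodes."""
    source_ids = tuple(e.id for e in cluster)
    facts = [Fact(str(uuid.uuid4()), claim, source_ids) for claim in split_atomic(summary)]
    for episode in cluster:
        episode.deleted = True  # hidden from retrieval, not erased
    return facts


def retrievable(episodes: list[Episode]) -> list[Episode]:
    return [e for e in episodes if not e.deleted]


def provenance(fact: Fact, episodes: list[Episode]) -> list[Episode]:
    by_id = {e.id: e for e in episodes}
    return [by_id[i] for i in fact.source_ids if i in by_id]


episodes = [
    Episode("e1", "User said they hate bright themes."),
    Episode("e2", "User switched the editor to dark mode and mentioned living in Berlin."),
]
facts = consolidate(episodes, "User prefers dark mode. User lives in Berlin.")
```

After consolidation, `retrievable(episodes)` is empty. Each fact still resolves to both original episodes through `provenance`.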
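"Stdlib networking only" can be illustrated by calling a local Ollama server with nothing but `json` and `urllib.request`. The sketch below has these assumptions:

- It targets Ollama's `/api/embed` REST endpoint on the default `localhost:11434` address. Check the endpoint and response shape against the Ollama version you run.
- The model name `nomic-embed-text` is an assumed example.
- The helper names `build_embed_request` and `embed` are hypothetical and not taken from agent-memory-core.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def build_embed_request(texts: list, model: str = "nomic-embed-text",
                        base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a JSON POST request for Ollama's embedding endpoint."""
    body = json.dumps({"model": model, "input": texts}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/api/embed",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def embed(texts: list, model: str = "nomic-embed-text",
          base_url: str = OLLAMA_URL, timeout: float = 30.0) -> list:
    """Send the request and return one embedding vector per input text."""
    request = build_embed_request(texts, model, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.loads(response.read().decode("utf-8"))
    return payload["embeddings"]
```

Calling `embed(["some note"])` requires a running Ollama server with the model pulled. Building the request on its own does not touch the network, which makes it easy to test offline.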

Discovered: 2026-04-17

Published: 2026-04-17

Author: Suspicious_Milk5211