Benchmarks Pit DFlash Against vLLM Qwen Stack

The Reddit thread is a practical bake-off between two heavily optimized local-serving stacks for Qwen3.6-27B on consumer GPUs. One is Luce-Org/Dflash, a llama.cpp-based stack with custom speculative-decoding optimizations; the other is noonghunna/qwen36-27b-single-3090, a vLLM-based setup with patches, AutoRound INT4 quantization, and MTP-style speculative decoding. The poster says both can be extremely fast, but results swing widely depending on whether they prompt the model directly or connect through opencode, which suggests the real bottleneck is often the serving path and prompt shape, not just the backend’s headline tok/s.

Hot take: this is not a clean “which backend is faster” question so much as “which stack is faster for your exact interaction pattern.”

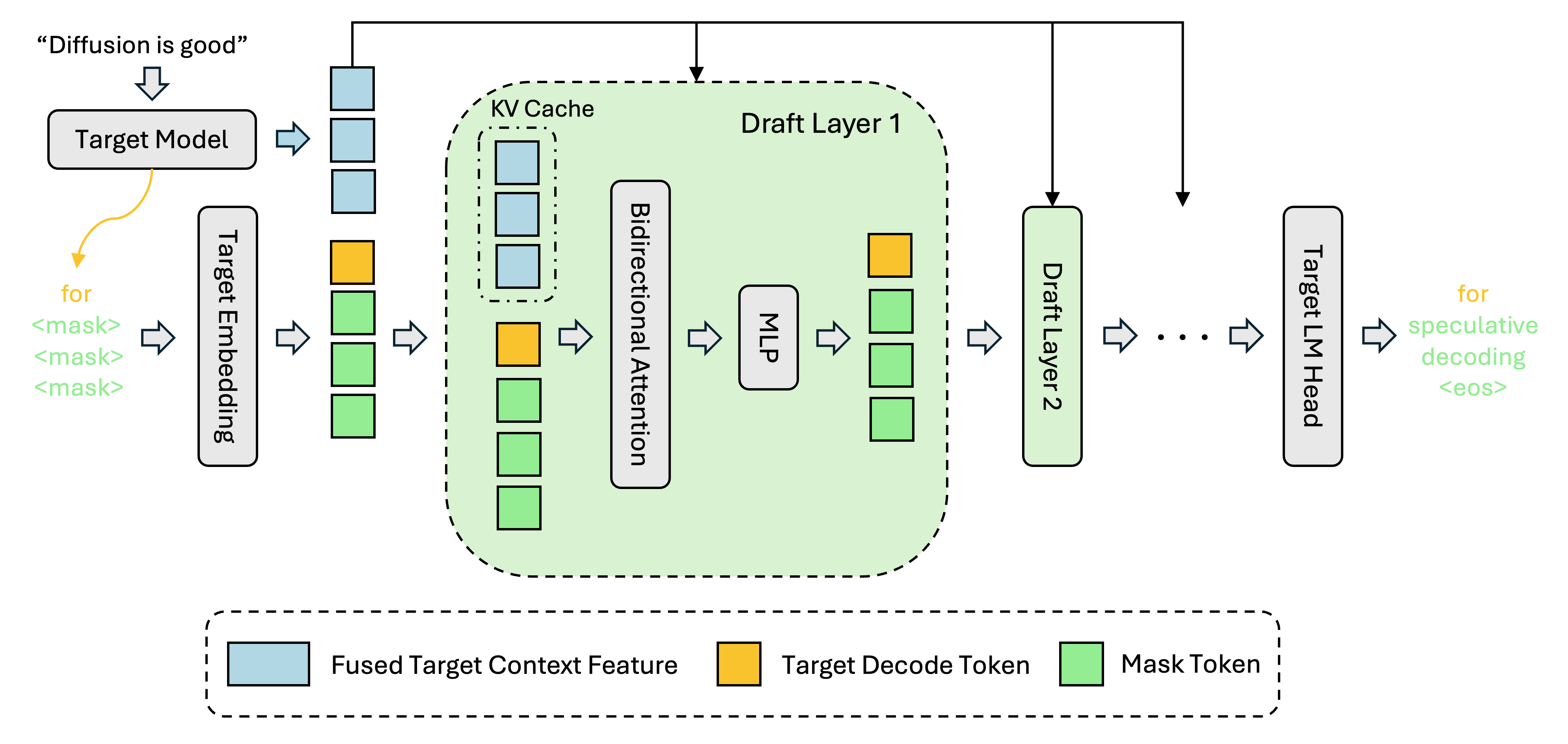

- DFlash is explicitly built for speculative decoding and parallel drafting, so it is optimized for low-latency generation in the direct-chat path.

- The vLLM recipe is a broader serving stack with patches, long-context support, and tool-use plumbing; the Medium write-up claims very high throughput on a single 3090, but also calls out acceptance variance and prompt-dependent cliffs.

- The “direct prompting was super fast, opencode was slow” symptom strongly points to client-side orchestration overhead, tool-call round trips, warmup/CUDA-graph effects, and different sampling/prefill behavior, not just model inference speed.

- For coding, the better stack is the one with the lowest end-to-end latency and the least jitter in your IDE loop; raw tok/s is a weak proxy if your agent spends time in tool calls, retries, or long-context prefill.

- If you care about consistency, benchmark first-token latency, time-to-first-tool-call, and sustained tokens/sec separately; that is where these two systems are most likely to diverge.
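To make the last point concrete, here is a minimal sketch of how the three numbers could be separated once you have a timestamped log of a streamed response. It is not tied to either stack's API: the event format, `StreamMetrics`, and `summarize_stream` are hypothetical names chosen for illustration, and it assumes you have already recorded, for each streamed chunk, the seconds elapsed since the request was sent, the number of tokens in the chunk, and whether the chunk begins a tool call.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple

# Hypothetical event format (an assumption, not a real client API):
# (seconds_since_request_sent, tokens_in_chunk, starts_tool_call)
Event = Tuple[float, int, bool]


@dataclass
class StreamMetrics:
    ttft_s: Optional[float]            # time to first token
    first_tool_call_s: Optional[float] # time to first tool call
    decode_tok_per_s: Optional[float]  # sustained rate after the first chunk


def summarize_stream(events: Iterable[Event]) -> StreamMetrics:
    """Split one streamed response into latency and throughput figures."""
    first_t: Optional[float] = None
    last_t: Optional[float] = None
    first_tool_t: Optional[float] = None
    tokens_after_first = 0

    for t, n_tokens, starts_tool_call in events:
        if starts_tool_call and first_tool_t is None:
            first_tool_t = t
        if n_tokens <= 0:
            continue  # control chunk with no generated tokens
        if first_t is None:
            first_t = t  # prefill and queueing time end here
        else:
            tokens_after_first += n_tokens
        last_t = t

    rate: Optional[float] = None
    if first_t is not None and last_t is not None and last_t > first_t:
        # Exclude the first chunk so prefill time does not dilute decode speed.
        rate = tokens_after_first / (last_t - first_t)

    return StreamMetrics(first_t, first_tool_t, rate)
```

Running the same prompt through direct chat and through opencode and comparing the three fields side by side should show whether the slowdown sits in prefill (high `ttft_s`), in orchestration (late `first_tool_call_s`), or in decoding itself (low `decode_tok_per_s`).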

DISCOVERED: 2026-04-28

PUBLISHED: 2026-04-28

AUTHOR: GodComplecs