OPEN_SOURCE

REDDIT // 3h ago · TUTORIAL

RAG-based harnesses, Markdown conversion top student LLM setups

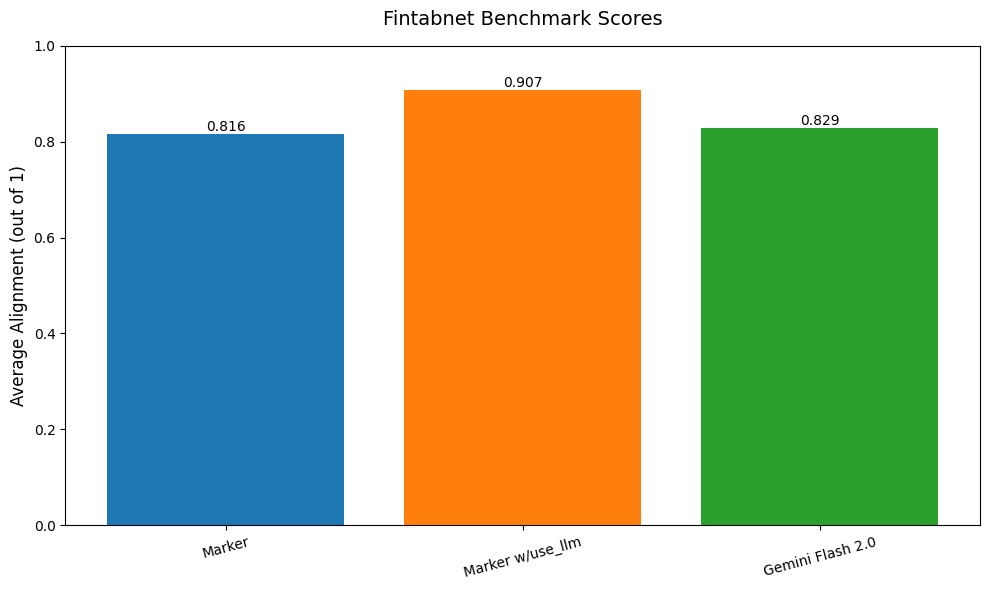

University students struggling with massive PDFs are moving from raw LLM prompting to RAG-based harnesses and high-fidelity Markdown conversion. PDF-to-Markdown tools like Marker and MinerU are essential for stripping layout noise while preserving the tables and formulas that matter.
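To make "stripping layout noise" concrete, here is a minimal Python sketch of a cleanup pass one might run on the converted Markdown. It is a hypothetical heuristic (the name `strip_fluff` and the `min_repeats` threshold are assumptions), not what Marker or MinerU do internally: it drops bare page numbers and lines that repeat across pages, such as running headers, while leaving headings, table rows, and `$$` math blocks untouched.

```python
import re
from collections import Counter

# Bare page numbers such as "12", "Page 12" or "12 / 100" (hypothetical heuristic).
PAGE_NUMBER = re.compile(r"^(page\s+)?\d+(\s*/\s*\d+)?$", re.IGNORECASE)


def strip_fluff(markdown: str, min_repeats: int = 3) -> str:
    """Drop page numbers and lines repeated min_repeats+ times (running
    headers/footers), keeping headings, table rows and math untouched."""
    lines = markdown.splitlines()
    counts = Counter(line.strip() for line in lines if line.strip())
    kept = []
    in_math = False
    for line in lines:
        stripped = line.strip()
        # Protect structure: inside $$...$$, table rows, headings, inline math.
        protected = in_math or stripped.startswith(("|", "#")) or "$" in stripped
        if stripped.count("$$") % 2 == 1:  # line opens or closes a $$ block
            in_math = not in_math
            protected = True
        if protected or not stripped:
            kept.append(line)
        elif PAGE_NUMBER.match(stripped) or counts[stripped] >= min_repeats:
            continue  # fluff: page number or repeated header/footer
        else:
            kept.append(line)
    return "\n".join(kept)
```

A real pipeline would tune `min_repeats` per document; note that a body line consisting of nothing but a number would also be dropped by this heuristic.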

// ANALYSIS

Standard chat interfaces fail on 100-page university documents because they feed the model raw PDF text extraction, where tables, formulas, and multi-column layouts come through scrambled: garbage in, garbage out.
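One way to see the "garbage in" problem: a plain-text extractor often emits a table as one run of tokens (for example `Material Density Melting point Aluminium 2.70 660 Copper 8.96 1085`), so nothing records which value belongs to which column. A Markdown pipe table keeps the cell boundaries and can be parsed back into records. A minimal sketch, assuming simple pipe tables with no escaped `|` characters or merged cells; `parse_pipe_table` is a hypothetical helper:

```python
def parse_pipe_table(block: str) -> list[dict[str, str]]:
    """Parse a simple Markdown pipe table (header, separator, body rows)
    into one dict per body row, keyed by column header."""
    rows = [line.strip() for line in block.strip().splitlines() if line.strip()]

    def cells(line: str) -> list[str]:
        # Drop the outer pipes, then split on the inner ones.
        return [cell.strip() for cell in line.strip("|").split("|")]

    header = cells(rows[0])
    # rows[1] is the |---|---| separator line, so body rows start at index 2.
    return [dict(zip(header, cells(row))) for row in rows[2:]]


table = """
| Material | Density (g/cm3) | Melting point (C) |
|---|---|---|
| Aluminium | 2.70 | 660 |
| Copper | 8.96 | 1085 |
"""
for record in parse_pipe_table(table):
    print(record["Material"], record["Melting point (C)"])
```

Because every value stays attached to its header, both downstream code and the LLM can tell that 1085 is copper's melting point rather than a density.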

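- Switching from pasting whole documents into 35B local models to running RAG pipelines (Open WebUI, AnythingLLM) is the most reliable way to keep detail without hitting context limits or the "lost in the middle" effect, where facts buried mid-context get ignored or hallucinated.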

- High-fidelity parsing with Marker or MinerU is the real secret sauce: standard text extraction flattens tables and skips diagrams, which is where much of the exam material in university documents actually lives.

- Qwen 2.5 Plus is a strong backbone, but it needs semantic chunking so that retrieval surfaces specific technical passages rather than general summaries.

- Local setups using DeepSeek V4 (Flash) are becoming viable for students with high-end consumer GPUs (RTX 3090/4090, 24 GB of VRAM) thanks to KV cache optimizations.
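The semantic-chunking point can be approximated cheaply by chunking along the document's own structure once it is Markdown. The sketch below is an assumption, not what any of the named tools ship: it splits at headings, packs blank-line-separated blocks up to a hypothetical `max_chars` budget, never splits a block (so tables stay whole), and tags each chunk with its heading path so a retrieved chunk still says where it came from. Embedding-based semantic chunking would additionally split where topic similarity drops; this structural version is a simpler substitute, and it does not handle `#` lines inside fenced code.

```python
import re

HEADING = re.compile(r"^(#{1,6})\s+(.+)$")


def chunk_markdown(md: str, max_chars: int = 1200) -> list[dict[str, str]]:
    """Structure-aware chunking: split at headings, pack blank-line-separated
    blocks up to max_chars, never split a block (so tables stay whole), and
    record the heading path of each chunk."""
    chunks: list[dict[str, str]] = []
    path: list[str] = []  # current heading trail, e.g. ["Thermo", "Ideal gas"]
    buf: list[str] = []   # blocks collected for the chunk being built

    def flush() -> None:
        if buf:
            chunks.append({"heading": " > ".join(path), "text": "\n\n".join(buf)})
            buf.clear()

    for block in re.split(r"\n\s*\n", md.strip()):
        block = block.strip()
        first, _, rest = block.partition("\n")
        match = HEADING.match(first)
        if match:
            flush()  # a new section always starts a new chunk
            level = len(match.group(1))
            del path[level - 1:]  # drop headings at this depth or deeper
            path.append(match.group(2).strip())
            block = rest.strip()  # text right under the heading, if any
        if not block:
            continue
        if buf and len("\n\n".join(buf)) + 2 + len(block) > max_chars:
            flush()  # adding this block would overflow the budget
        buf.append(block)  # an oversized single block is kept whole
    flush()
    return chunks
```

Prefixing each chunk's text with its `heading` before embedding or indexing is a common way to keep retrieved snippets anchored to their section.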
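The retrieval step behind a RAG pipeline can be sketched as: score every chunk against the question, keep the top k, and send only those to the model, so a 100-page document never has to fit in the context window. Open WebUI and AnythingLLM normally score with embedding models; to stay self-contained, this sketch swaps in TF-IDF cosine similarity. `top_k`, `build_prompt`, and the prompt wording are hypothetical.

```python
import math
import re
from collections import Counter

TOKEN = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> list[str]:
    return TOKEN.findall(text.lower())


def top_k(query: str, chunks: list[str], k: int = 3) -> list[tuple[float, str]]:
    """Rank chunks by TF-IDF cosine similarity to the query (a lexical
    stand-in for the embedding search a RAG front end would normally use)."""
    docs = [Counter(tokenize(c)) for c in chunks]
    n = len(docs)
    df = Counter(term for d in docs for term in d)  # document frequency
    idf = {t: math.log((1 + n) / (1 + df[t])) + 1 for t in df}  # smoothed IDF

    def vec(counts: Counter) -> dict[str, float]:
        # Terms never seen in the corpus get weight 0.
        return {t: c * idf.get(t, 0.0) for t, c in counts.items()}

    def cosine(a: dict[str, float], b: dict[str, float]) -> float:
        dot = sum(w * b.get(t, 0.0) for t, w in a.items())
        na = math.sqrt(sum(w * w for w in a.values()))
        nb = math.sqrt(sum(w * w for w in b.values()))
        return dot / (na * nb) if na and nb else 0.0

    q = vec(Counter(tokenize(query)))
    scored = [(cosine(q, vec(d)), c) for d, c in zip(docs, chunks)]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


def build_prompt(query: str, chunks: list[str], k: int = 3) -> str:
    # Only the k best-matching excerpts reach the model.
    context = "\n\n---\n\n".join(c for _, c in top_k(query, chunks, k))
    return f"Answer using only the excerpts below.\n\n{context}\n\nQuestion: {query}"
```

Replacing the scorer inside `top_k` with embedding similarity leaves the rest of the flow unchanged.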
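To see why KV cache size decides what fits on a 24 GB RTX 3090/4090, here is the standard back-of-the-envelope formula for a transformer with grouped-query attention: cache bytes = 2 (keys and values) × layers × KV heads × head dim × tokens × bytes per element. The model config below is hypothetical and is not DeepSeek's; DeepSeek V2/V3 use multi-head latent attention, which caches a compressed latent per token and so needs less than this formula suggests. The numbers show how fewer KV heads and an 8-bit cache shrink the memory that sits on top of the weights.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int, seq_len: int,
                   bytes_per_elem: int = 2, batch: int = 1) -> int:
    # Factor 2: one key and one value vector per KV head, per layer, per token.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem * batch


GIB = 2**30
# Hypothetical 48-layer config with a 32k-token context.
fp16 = kv_cache_bytes(48, 8, 128, 32_768)                    # 8 KV heads (GQA), fp16
int8 = kv_cache_bytes(48, 8, 128, 32_768, bytes_per_elem=1)  # same, 8-bit cache
mha = kv_cache_bytes(48, 64, 128, 32_768)                    # 64 KV heads (full MHA)
print(f"GQA fp16: {fp16 / GIB:.1f} GiB")  # 6.0 GiB
print(f"GQA int8: {int8 / GIB:.1f} GiB")  # 3.0 GiB
print(f"MHA fp16: {mha / GIB:.1f} GiB")   # 48.0 GiB
```

Under these assumptions, the full multi-head cache alone would exceed a 24 GB card, while the grouped-query, 8-bit variant leaves most of the VRAM for weights.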

// TAGS

rag · llm · local-llm · pdf-parsing · marker · mineru · qwen · deepseek

DISCOVERED

3h ago

2026-04-26

PUBLISHED

5h ago

2026-04-26

RELEVANCE

8/10

AUTHOR

Trovebloxian